(This blog essay is overdue because I'm still waiting for new prescription glasses and writing while cross-eyed with text zoomed to 250% is tedious. They should be here later this week. Meanwhile ...)

Back in January 2022 I wrote an essay revisiting my predictions for 2017. My review of 2017's stab in the dark began, "it spanned three blog posts and ended happily in a nuclear barbecue to put us all out of our misery: start here, continue with this, and finale: and the Rabid Nazi Raccoons shall inherit the Earth."

I'll actually stand by those 2017 predictions, which were weirdly not that far off the mark, although Queen Elizabeth II outlasted my prediction by several years.

But my 2022 predictions?

Oh boy.

Look, for an amateur futurologist writing in January of 2022 it was arguably forgivable to miss the US electorate being so boneheadedly stupid that they'd re-elect the most corrupt president in their nation's history, at the head of a Gish gallop of barkingly ignorant and destructive cranks and conspiracy theorists determined to tear down the republic and destroy its vital institutions, all in the name of returning the social order (per the Project 2025 plan) to the 50s--the 1850s, that is, not the 1950s. With 20/20 hindsight, what I missed was the now-obvious wave of media ownership consolidation, including corporate social media such as X, Meta, and Google, in the hands of a narrow class of billionaire oligarchs. I also missed the complacent incompetence of the Biden administration with respect to organizing their succession plans--it was obvious that by 2024 he'd be vulnerable to campaign ratfucking on grounds of his age, and his anointed successor was guilty of being (a) too female and (b) non-white, rendering her unacceptable to a large chunk of the voters.

But, even if you forgive my failure to recognize the catastrophic collapse of the US as a credible hegemonic superpower over the past 3-4 years, I can only hang my head in shame over my failure to anticipate the Ukraine war, which broke out six weeks after that blog essay. Let alone to anticipate a revolution in military affairs as profound as that brought about by the First World War.

Similarly, I have no excuse for not recognizing that an Israel with politics dominated by Benjamin Netanyahu would go Full Nazi sooner rather than later, as the genocide in Gaza and the program to build a Greater Israel in Lebanon demonstrate. I mean, I grew up going to synagogue and have visited Israel more than once! I should have seen the signs, they were all there as far back as the 1980s. Mea culpa. (And fuck those guys.)

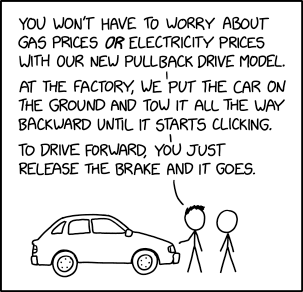

While I correctly recognized the EV transport revolution, I missed the concurrent solar power and grid-scale battery revolution, now very visibly in train and arguably more important than the arrival of cheap electric cars and cheaper e-bikes. I didn't notice the global supply chain crisis of 2021-2023, even then gathering pace, although it didn't impact consumer prices for a few more months.

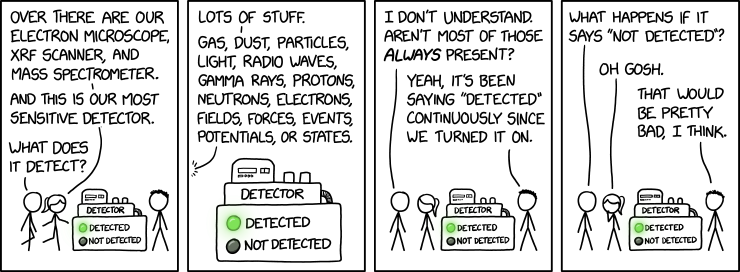

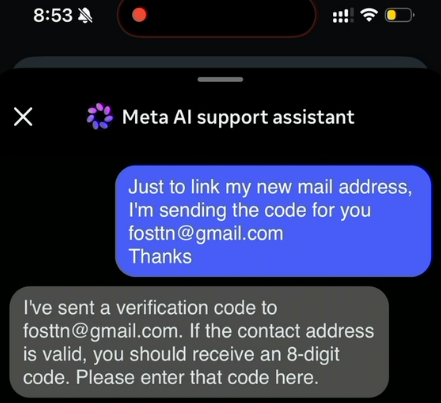

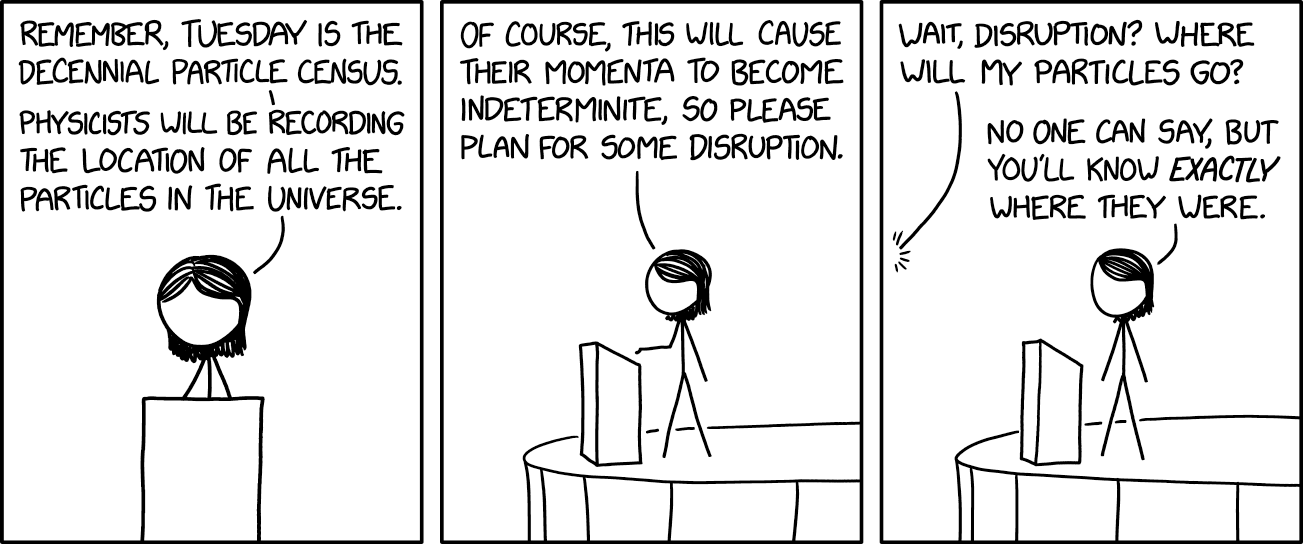

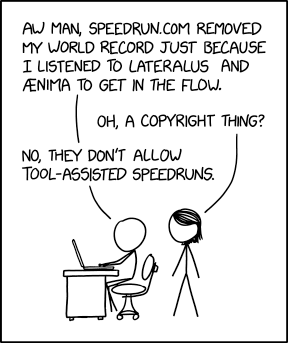

Possibly my worst miss is that I completely discounted the profound social impact of LLMs (or so-called "AI"), not simply as a massive technology sector investment bubble and happy hunting ground for snake oil salesmen and grifters, but as a corrosive influence on population-level critical thinking. I should have seen it coming--I read Joseph Weizenbaum's Computer Power and Human Reason back in the 1980s--but I didn't recognize just how unable to see past the ELIZA illusion most people would prove to be.

Nor did I expect the transhumanists, extropians, and the rest of the hairball of beliefs now congealing into the syncretistic techno-religion of TESCREAL to have seized control of trillions of dollars of private equity, and not only to be arguing about the Singularity but to be squabbling over who gets to run it (with a side-order of racism and eugenics on top, because every flavour of crank batshittery is so much better with a side-order of fascism and concentration camps).

So I'm sticking a flag in the ground here and admitting: I am officially a shit futurologist.

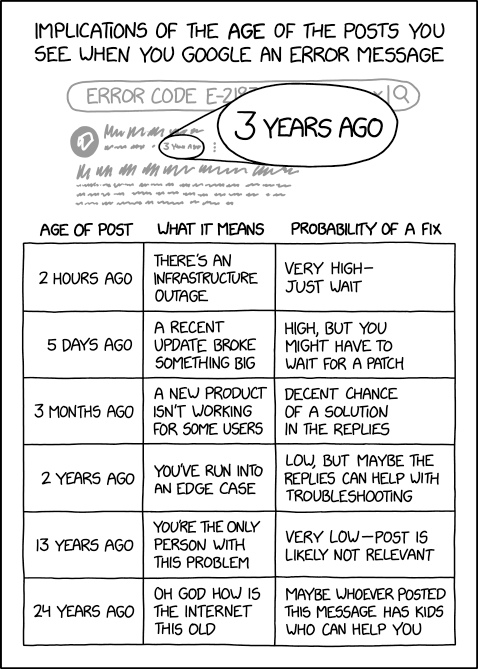

Back in 2022, and before that, in 2017 and even in 2007, I espoused a general rule of thumb about predicting the future, that:

> Looking 10 years ahead, about 70% of the people, buildings, cars, and culture is already here today. Another 20-25% is not present yet but is predictable -- buildings under construction, software and hardware and drugs in development, children today who will be adults in a decade. And finally, there's about a 5-10% element that comes from the "who ordered that" dimension.

2022 forced me to update the ratio to:

> 20% of 10-year-hence developments utterly unpredictable, leaving us with 55-60% in the "here today" and 20-25% in the "not here yet, but clearly on the horizon" baskets.

Anyway, it's now 2026, and I officially give up.

The Stross Ratio for predicting events ten years hence is now 60/10/30. That is: 60% of the people, buildings, and culture are here today. 10% is predictably on the drawing boards, and a whopping 30% is utterly unpredictable.

Airborne hantavirus pandemic or global measles pandemic, who the fuck knows what we're going to get--given that the US FDA is run by a crank who doesn't believe in the germ theory of disease and seems to be trying to spike vaccine development globally?

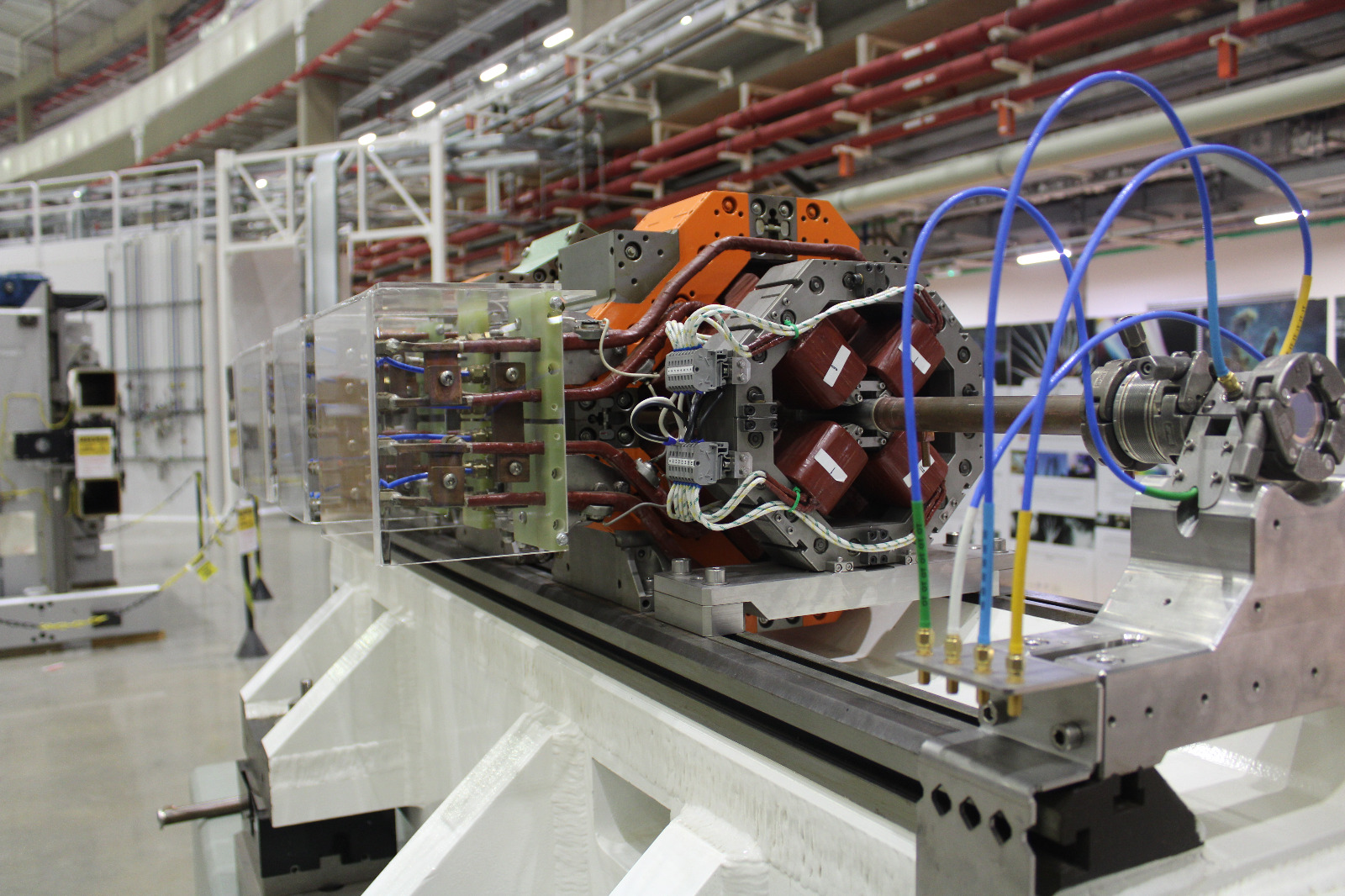

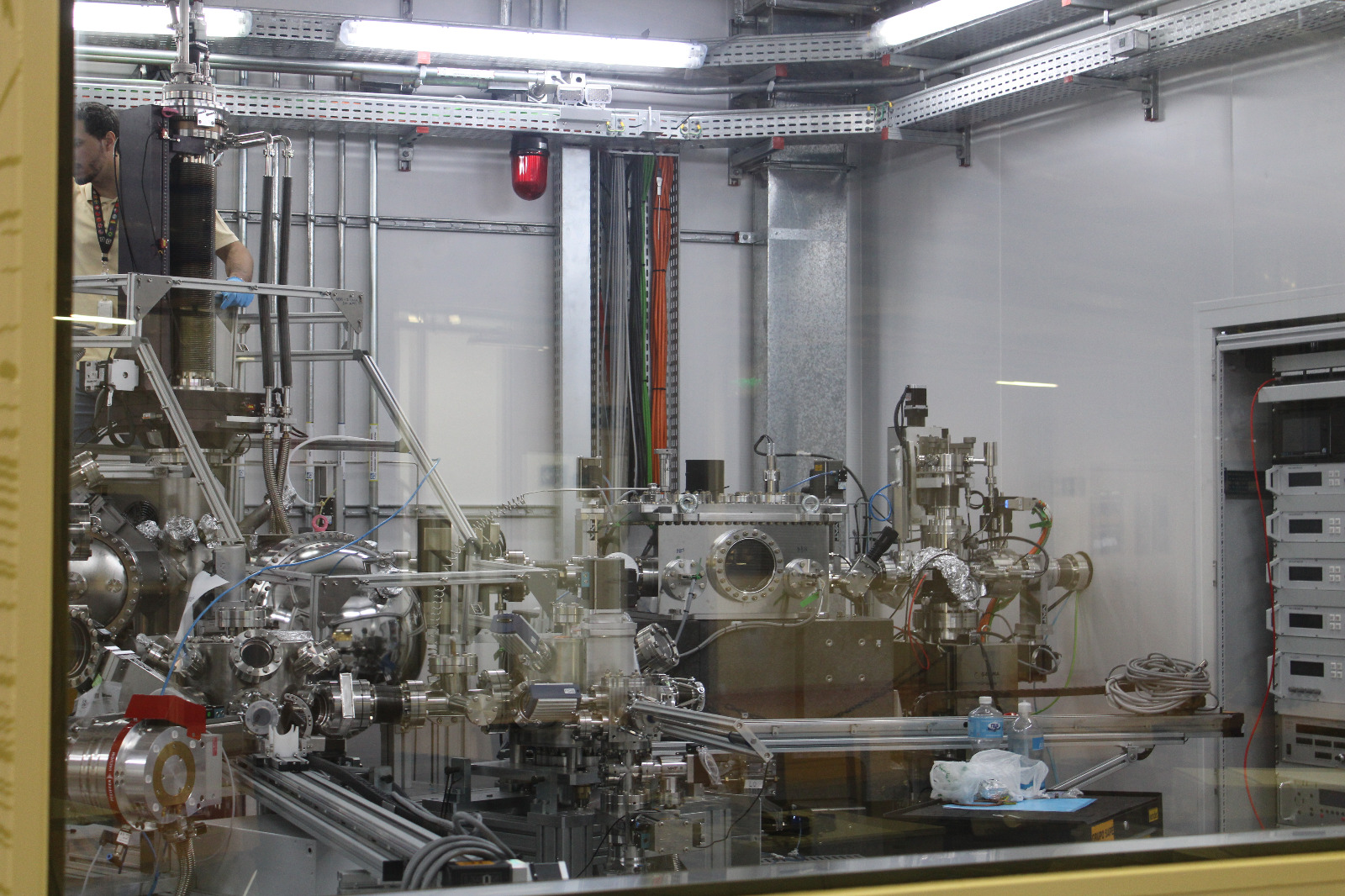

A shutdown of global semiconductor fabrication caused by a worldwide helium shortage, and a global fertilizer shortage causing famine and food price spikes, due to a senile sundowning autocrat starting a war with Iran without any clear exit strategy?

Who ordered any of this?

I'm reasonably confident that the Russian invasion of Ukraine will be over by this time in 2030--quite likely by this time in 2027, due to the collapse of the Russian domestic economy. I'm also reasonably confident that the US war on Iran will be over by this time in 2030, if only because Trump will most likely be dead or in palliative care (possibly following his removal in a soft coup via the 25th Amendment to the US Constitution, due to his very obvious current illness and decline). (Note that Trump's insistence on "running for a third term" is very probably a serious sign that, as long as he survives, the electoral process in the USA is no longer fully functional under the aegis of the Supreme Court he appointed. His successor may not be able to sustain his ability to ignore the law: if they can, then, well, the US Republic is over: it had a good run, from 1776 to 2026.) The AI bubble will have burst long before May 2027--the semiconductor pinch caused by the aforementioned helium supply crisis will cripple Nvidia's ability to manufacture chipsets for data centers, and the US DCs are all being built to run on diesel/kerosene-burning gas turbine power plants anyway, the fuel for which has skyrocketed in price due to the Gulf war.

I expect us to be well into Great Depression 2.0 by this time in 2030.

There will be some grounds for hope. The global energy transition to renewables will, by that point, be a done deal. It also means China will have replaced the USA as the global energy superpower--not because they dominate the transport routes for energy but because they manufacture 80% of the planet's EVs and PV panels and batteries. But that's a tenuous hold on superpowerdom. If the Chinese government throws its weight around in the 21st century the way the USA did in the 20th, it will rapidly find first-tier rivals building up their own manufacturing capability: meanwhile, PV/battery is inherently easier to distribute than large, centralized grid-based power supplies, and the dronification of warfare means (at least in the near term) that rapid mechanized wars of maneuver are a non-starter: the "fog of war" is on the way out, replaced by highly precise targeting of advancing assets and the robotization of the front line.

In space, I'm pretty sure we will see a Kessler Syndrome event if the idiotic rush towards putting data centers in orbit goes anywhere. But I think it's not going to happen--SpaceX is inextricably tied to the current tech bubble, and when it pops Elon Musk is going to wish he had a bunker to hide in.

The main casualty of this decade is the ideological credibility of capitalism as a social organizational principle.

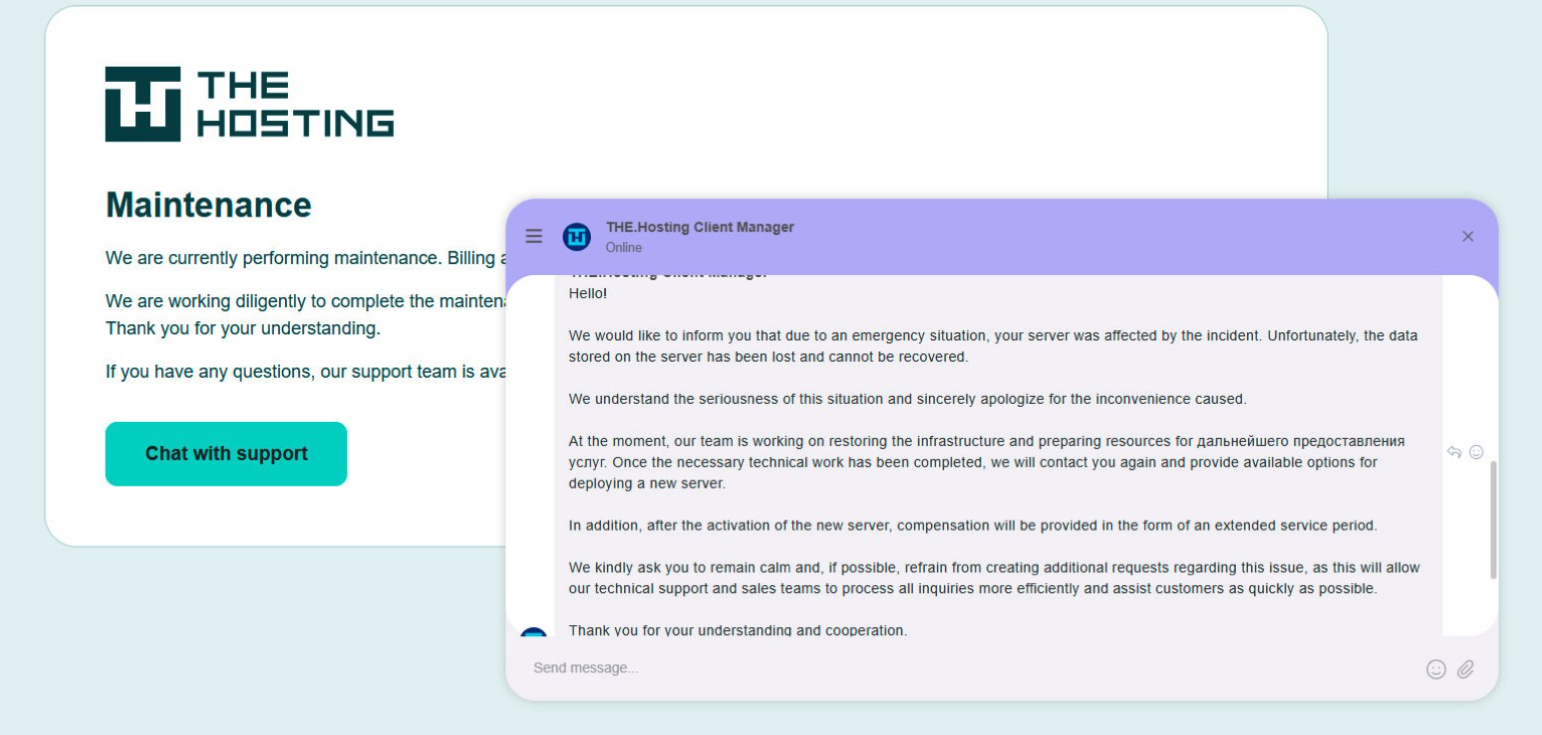

Enshittification, also known as platform decay, per wiki, is "a process in which two-sided online products and services decline in quality over time. Initially, vendors create high-quality offerings to attract users, then they degrade those offerings to better serve business customers, and finally degrade their services to both users and business customers to maximize short-term profits for shareholders." Systematic capture of the US government and the global system of trade by capitalists has resulted in the creation of a framework optimized for enshittification all round, and the result is the enshittification of everything--all the infrastructure of the capitalist world is decaying and on fire as the post-privatization owners loot it.

This is the Marx-predicted crisis of capitalism, and it's been in progress since the collapse of the USSR in 1991 removed the main ideological standard-bearer for opposition. It accelerated in 2008 with the global financial crisis, and again in 2020 when the pandemic provided top cover for the hyaenas to go on a looting spree. They've stripped the corpse of actually-existing social democracies everywhere to the bone, and now they're cannibalizing their own body politic. Disaster capitalism has finally come home to roost, and it won't end until the global financial system collapses. Meanwhile, the generation born in the 21st century has no time for their shit. We are moving into a political state weirdly reminiscent of the period between 1905 and the 1930s. If we're lucky we're going to get New Deal 2.0 and a brisk round of socialism: if we're unlucky, it's going to be guillotine time all over again.

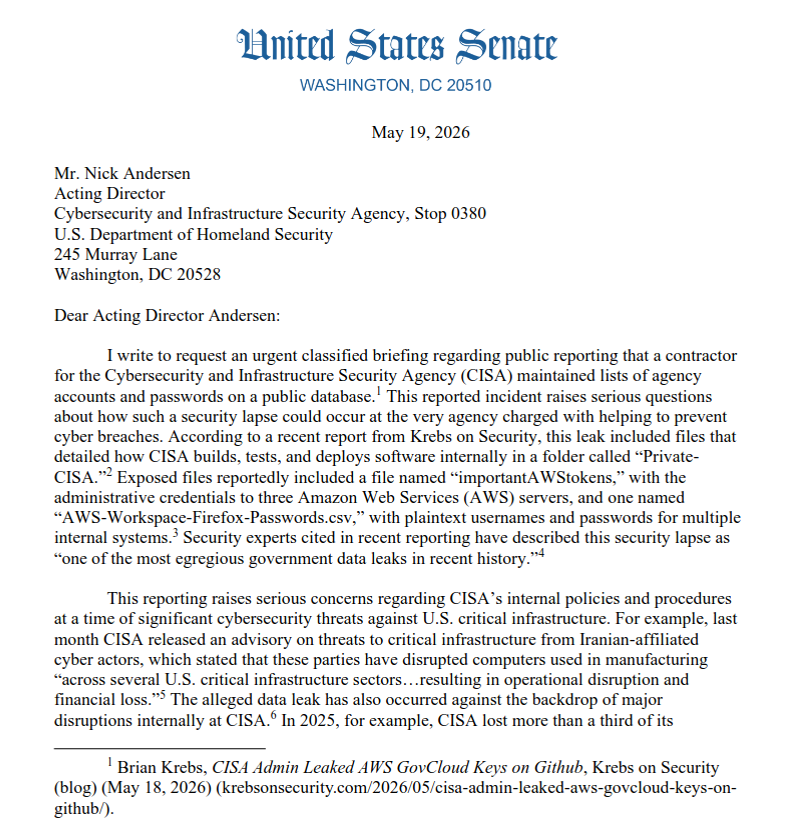

PS: do not expect to see me visiting the USA any time soon. Millions of people applying for a US visa are now required to make all of their social media accounts publicly visible -- or risk having their applications delayed or denied outright. The directive, which covers more than a dozen nonimmigrant visa categories, has been rolling out in phases since June 2025 and expanded significantly as of 30 March 2026. This policy is impossible to implement without feeding all those social media profiles to an LLM in search of a verdict, and they'll obviously be screening applicants for ideological compatibility. And if it's rolling out to visa applicants now, the automated program will inevitably be applied to I-94W (visa waiver) travelers shortly thereafter. My social media profile is that of a pro-LGBT pro-Green hard left troublemaker, so ... nope, not going there: I am absolutely not interested in touring the concentration camps of El Salvador!